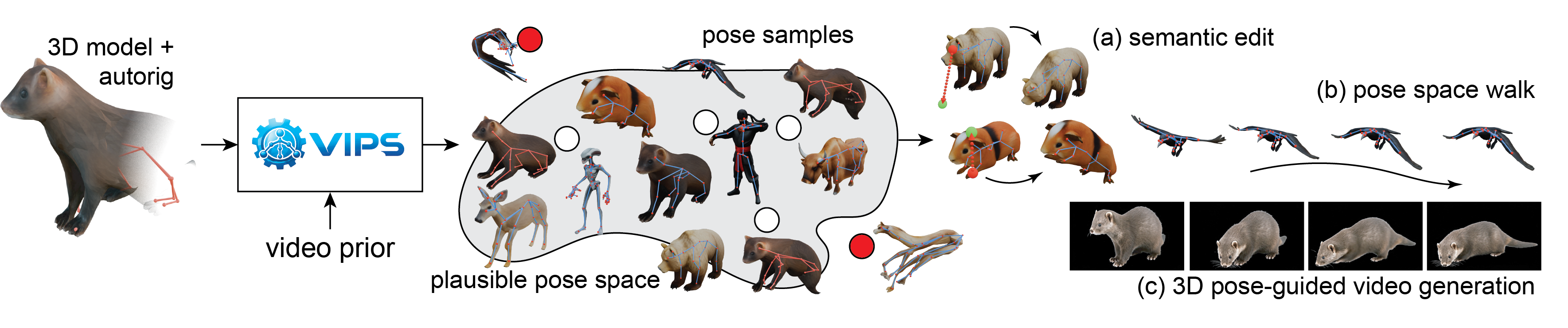

Overview

We first build a dataset of rigged meshes and plausible skeleton poses by combining video priors, per-frame 3D reconstruction, skeleton extraction, and pose optimization. We then train a diffusion model conditioned on the mesh and skeleton to model the distribution of plausible poses. At inference, we sample from the model, invert poses with DDIM inversion, and apply sparse constraints through guidance.

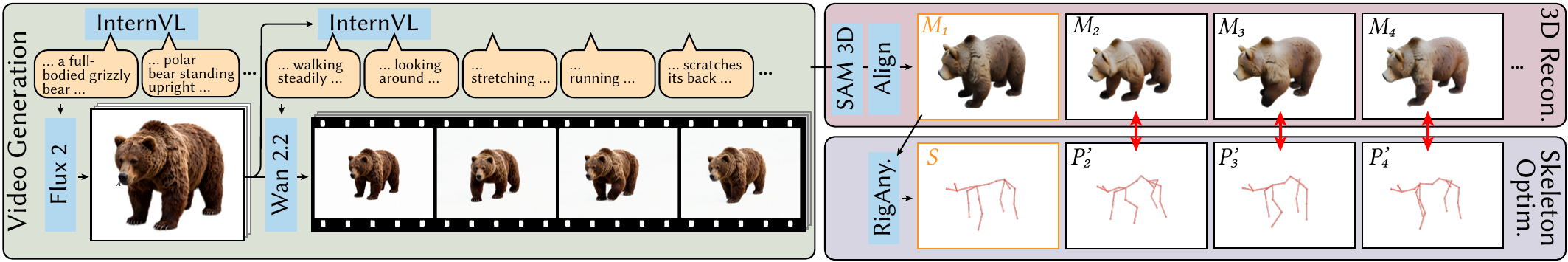

Rigged mesh poses from a video prior. We generate single-object videos with image-to-video priors, reconstruct per-frame meshes and align them in a common world space, then extract a skeleton from the first frame and optimize node positions to match each frame using Chamfer distance and edge-length regularization.
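The per-frame fitting objective described above can be sketched as a Chamfer term plus an edge-length regularizer. This is a minimal NumPy illustration, not the authors' implementation: the function names, the `weight` parameter, and the choice of which two point sets the Chamfer term compares are assumptions, since the text does not specify them.

```python
import numpy as np

def chamfer_distance(a, b):
    """Symmetric Chamfer distance between point sets a (N, 3) and b (M, 3)."""
    # Pairwise squared distances, shape (N, M).
    d2 = ((a[:, None, :] - b[None, :, :]) ** 2).sum(-1)
    # Mean nearest-neighbour distance in both directions.
    return d2.min(axis=1).mean() + d2.min(axis=0).mean()

def edge_length_penalty(nodes, edges, rest_lengths):
    """Mean squared deviation of skeleton edge lengths from their rest lengths."""
    lengths = np.linalg.norm(nodes[edges[:, 0]] - nodes[edges[:, 1]], axis=1)
    return ((lengths - rest_lengths) ** 2).mean()

def fitting_objective(posed_points, frame_points, nodes, edges, rest_lengths, weight=1.0):
    """Hypothetical combined objective: data term plus length regularizer.

    posed_points: points driven by the current node positions (assumption).
    frame_points: points sampled from the reconstructed frame (assumption).
    """
    data_term = chamfer_distance(posed_points, frame_points)
    reg_term = edge_length_penalty(nodes, edges, rest_lengths)
    return data_term + weight * reg_term
```

In practice such an objective would be minimized over the node positions with a gradient-based optimizer; the regularizer discourages bones from stretching while the Chamfer term pulls the skeleton toward each frame.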

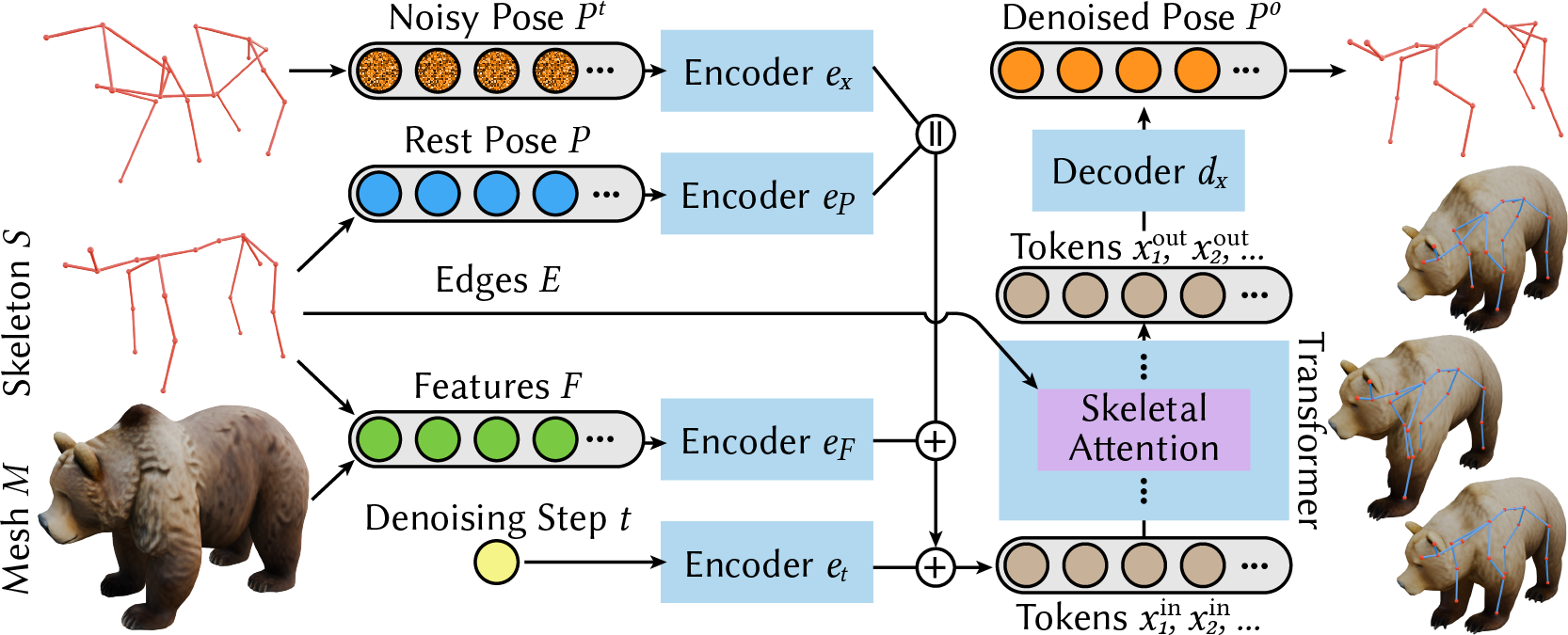

Learning a pose space. We train a diffusion model to denoise poses conditioned on the mesh, skeleton edges, rest pose, and semantic node features. Sampling uses a standard denoising schedule.
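As a rough illustration of what denoising training involves, the sketch below noises a clean pose and scores a noise prediction against the true noise. The linear beta schedule, the noise-prediction target, and all names (`make_alpha_bars`, `add_noise`, `denoising_loss`, `predict_eps`, `cond`) are assumptions chosen for illustration; the text above does not state which parameterization or schedule is used.

```python
import numpy as np

def make_alpha_bars(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative signal levels for an assumed linear beta schedule."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    return np.cumprod(1.0 - betas)

def add_noise(x0, t, alpha_bars, rng):
    """Sample x_t from a clean pose x0 at step t; also return the noise used."""
    eps = rng.standard_normal(x0.shape)
    a = alpha_bars[t]
    x_t = np.sqrt(a) * x0 + np.sqrt(1.0 - a) * eps
    return x_t, eps

def denoising_loss(predict_eps, x0, cond, t, alpha_bars, rng):
    """Mean squared error between predicted and true noise.

    predict_eps(x_t, t, cond) stands in for the conditional network;
    cond would bundle mesh, skeleton edges, rest pose, and node features.
    """
    x_t, eps = add_noise(x0, t, alpha_bars, rng)
    return float(np.mean((predict_eps(x_t, t, cond) - eps) ** 2))
```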

Constrained sampling. To reproduce a given pose, we map it to a noise sample with DDIM inversion. To apply sparse constraints, we nudge the denoising trajectory with an energy term.

All examples below are from assets unseen during training, using only the rest mesh and its auto-rig as input.

Qualitative Comparisons

Pose Walks

To visualize the continuity of the learned pose space, we generate pose-space traversals by interpolating in latent noise space and decoding each intermediate step. We first obtain the noisy latent x_T for the start and end poses using deterministic DDIM inversion (η = 0), then interpolate between them using variance-preserving interpolation, and decode each step with DDIM. This produces smooth, semantically meaningful transitions that remain on the learned manifold, in contrast to direct interpolation in joint space.
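The traversal procedure can be sketched as follows. This is a hedged NumPy illustration under stated assumptions: `predict_eps(x, t)` is a stand-in for the trained, conditioned denoiser, `alpha_bars` is an assumed decreasing array of cumulative signal levels, and the cosine/sine blend is one common variance-preserving choice (spherical interpolation is another); the page does not specify the exact formula.

```python
import numpy as np

def ddim_step(x, eps, a_from, a_to):
    """Deterministic DDIM update (eta = 0) between two cumulative-alpha levels."""
    x0_pred = (x - np.sqrt(1.0 - a_from) * eps) / np.sqrt(a_from)
    return np.sqrt(a_to) * x0_pred + np.sqrt(1.0 - a_to) * eps

def ddim_invert(x0, predict_eps, alpha_bars):
    """Run the update from a clean pose toward noise to obtain x_T."""
    x, a_prev = x0, 1.0
    for t, a in enumerate(alpha_bars):
        x = ddim_step(x, predict_eps(x, t), a_prev, a)
        a_prev = a
    return x

def ddim_decode(x_T, predict_eps, alpha_bars):
    """Run the same update from x_T back to a clean pose."""
    x = x_T
    for t in range(len(alpha_bars) - 1, -1, -1):
        a_to = alpha_bars[t - 1] if t > 0 else 1.0
        x = ddim_step(x, predict_eps(x, t), alpha_bars[t], a_to)
    return x

def vp_interpolate(z0, z1, s):
    """Blend two noise samples with weights whose squares sum to one."""
    theta = 0.5 * np.pi * s
    return np.cos(theta) * z0 + np.sin(theta) * z1

def pose_walk(pose_a, pose_b, predict_eps, alpha_bars, num_steps=8):
    """Invert both poses, interpolate in noise space, decode each step."""
    z_a = ddim_invert(pose_a, predict_eps, alpha_bars)
    z_b = ddim_invert(pose_b, predict_eps, alpha_bars)
    return [ddim_decode(vp_interpolate(z_a, z_b, s), predict_eps, alpha_bars)
            for s in np.linspace(0.0, 1.0, num_steps)]
```

Because the blend weights satisfy cos² + sin² = 1, two independent unit-variance noise samples combine into a sample with unit variance, which keeps intermediate latents at the noise level the decoder expects. A plain linear blend would shrink the variance near the midpoint.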

Semantic Pose Editing

ViPS supports inverse kinematics by projecting user-driven joint handles (orange → green) into the learned space of plausible poses. The generated poses remain faithful to the learned prior while approximately satisfying sparse user constraints through guided sampling: an energy function measures constraint violation and nudges each denoising step toward lower energy.
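One way to picture energy-guided sampling is the sketch below, which shifts the clean-pose estimate inside a deterministic DDIM step down the gradient of a constraint energy. Everything here is an assumption made for illustration: poses are treated as (J, 3) joint positions, the energy is a sum of squared distances to the handle targets, the gradient is applied to the clean estimate, and `scale`, `handle_ids`, and `targets` are hypothetical names. The actual energy and guidance rule may differ.

```python
import numpy as np

def constraint_energy(pose, handle_ids, targets):
    """Sum of squared distances between constrained joints and their targets."""
    diff = pose[handle_ids] - targets
    return float((diff ** 2).sum())

def constraint_energy_grad(pose, handle_ids, targets):
    """Gradient of the energy with respect to every joint position.

    Unconstrained joints receive zero gradient. handle_ids must be unique.
    """
    grad = np.zeros_like(pose)
    grad[handle_ids] = 2.0 * (pose[handle_ids] - targets)
    return grad

def guided_ddim_step(x, eps, a_from, a_to, handle_ids, targets, scale=0.1):
    """Deterministic DDIM step with an energy nudge on the clean estimate."""
    x0_pred = (x - np.sqrt(1.0 - a_from) * eps) / np.sqrt(a_from)
    # Move the clean estimate toward lower constraint energy.
    x0_pred = x0_pred - scale * constraint_energy_grad(x0_pred, handle_ids, targets)
    return np.sqrt(a_to) * x0_pred + np.sqrt(1.0 - a_to) * eps
```

A small `scale` keeps each step close to the unguided trajectory, so constraints are met approximately rather than exactly; larger values trade prior fidelity for tighter constraint satisfaction.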

Controllable Video Generation

Our pose space provides a simple interface for generating keyframes that can steer a video diffusion model. We select keyframes along a pose-space traversal (or between edits), render the corresponding mesh+skeleton proxy, and supply these as conditioning frames. This enables controllable, semantically aligned video generation: the video model is free to synthesize appearance and texture, while the pose sequence provides precise 3D control.

Data Pipeline

We introduce a high-quality 4D motion dataset with correspondence, containing 127k poses that span 100+ species and 200+ unique individuals. It is built from generative video priors with VLM guidance and 4D reconstruction. The dataset will be released upon acceptance.

Limitations

Our method is still constrained by the input auto-rig, so issues such as poor bone placement, inaccurate skinning weights, or suboptimal topology can directly limit the learned pose space. It also inherits biases from the video prior, which may struggle with rare motions, unusual species, or non-biological objects. More broadly, the current framework models plausible static articulations rather than full motion dynamics, and it does not yet capture more complex deformation behaviors such as soft materials, topology changes, or secondary physical effects.

BibTeX

@article{chen2025vips,
  title = {ViPS: Video-informed Pose Spaces for Auto-Rigged Meshes},
  author = {Chen, Honglin and Pandey, Karran and Wu, Rundi and Gadelha, Matheus and Hold-Geoffroy, Yannick and Tewari, Ayush and Mitra, Niloy J. and Zheng, Changxi and Guerrero, Paul},
  journal = {arXiv preprint arXiv:2026.XXXXX},
  year = {2026}
}